iStock

Part of the Wondering series

A series of random questions answered by Harvard experts.

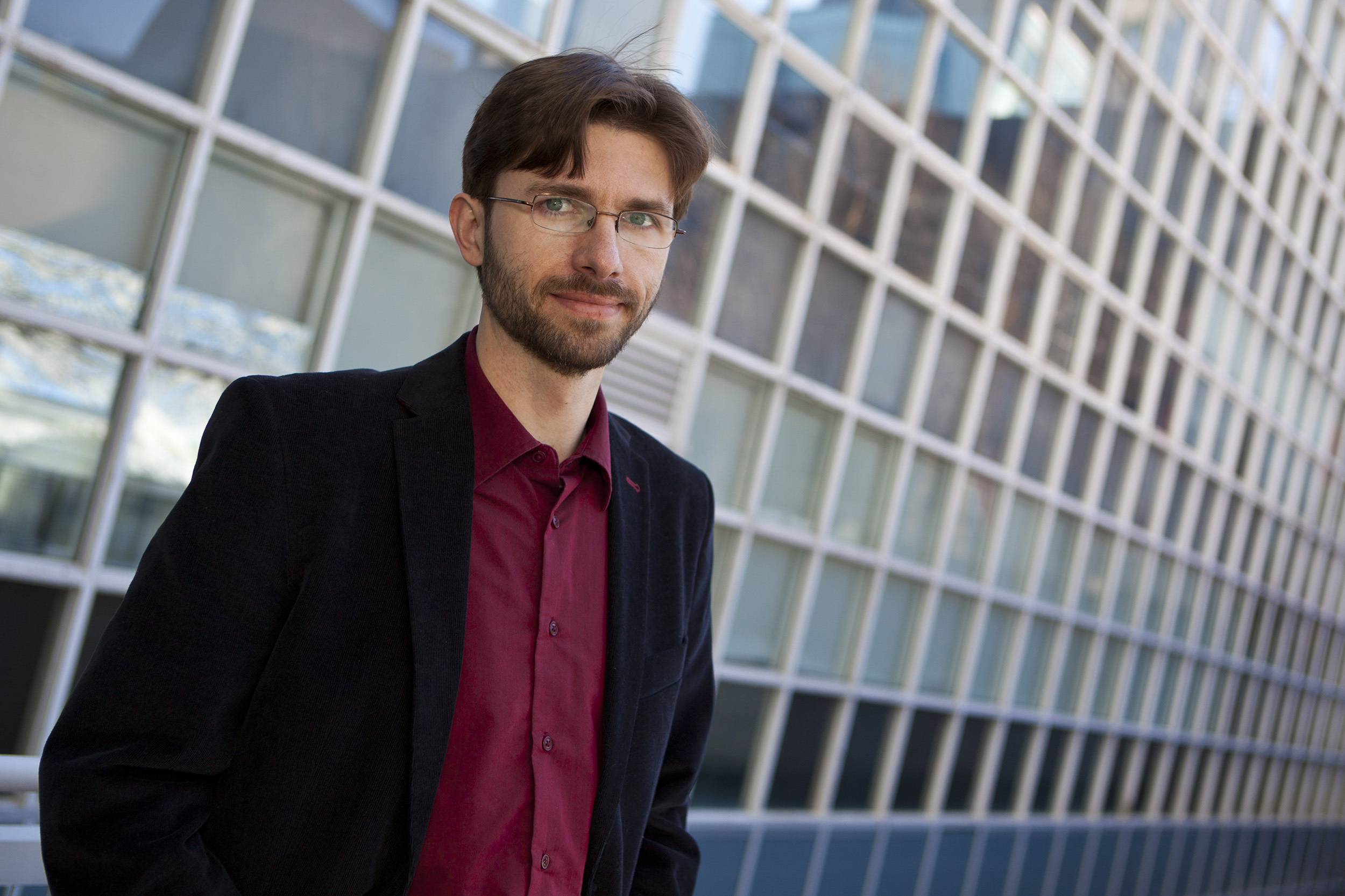

For the first installment, we asked Krzysztof Gajos, Gordon McKay Professor of Computer Science, to tell us when a robot will write a novel.

From the perspective of someone somewhat familiar with the state of the art in machine learning, my answer is that AI may be able to write trashy novels as soon as next year, but it will not write a true novel in the foreseeable future.

What I mean by this is that the AI tools that we have developed are very good at manipulating surface levels of representation. For example, AI is good at manipulating musical notes without being capable of coming up with a musical joke or having any intention of engaging in a particular conversation with audiences. And AI may produce visually appealing artifacts, again, without any high-level intent behind such an artifact.

In terms of words, AI is very good at manipulating language without understanding or manipulating meaning. When it comes to novels, some genres are formulaic, such as certain kinds of unambitious science fiction that have very predictable narrative arcs, particular components of world-building and character-building, and very well-understood kinds of tension. And we now have AI models that are capable of stringing together tens of coherent sentences. This is a big advance because until recently, we could only generate one or two sentences at a time, and the moment we got to 10 sentences, the 10th sentence had nothing to do with the first one. Now, we can automatically generate much larger pieces of prose that hold together. So it would likely be possible to write a trashy novel that has a particular form, where those components are automatically generated in such a way that it feels like a real novel with a real plot.

When it comes to more sophisticated works, the point I would make is that fiction is an extremely effective and important part of our contemporary discourse. It allows us to set aside our allegiances, suspend disbelief, and step into the shoes of somebody with a very different perspective, and to be really open to that perspective. We are able to imagine other people’s lives, we are able to imagine alternative ways of interacting with other people, and we’re able to consider them in a really open-minded way.

It’s important to note that I’m relying on my expert perspective when I talk about computer science, and my personal perspective when I talk about my experience as a reader. But from the perspective of a reader, I don’t think we will have a robot that is able to engage with and manipulate these kinds of meanings.

As a reader, I find that with gripping fiction, it’s not just the content of the book, it’s not just the plot, it’s not just the issues that engage me. It’s also the fact that I’m engaging with another human who wants me to reimagine the world. It’s part of a discourse.

Another point I would make about the technology is that in recent years researchers have drawn a distinction between manipulating language and manipulating meaning. They point out that, through tools that expertly manipulate language, we’ve created an illusion that machines can understand and manipulate meaning. But that’s absolutely not the case. We have created a grand con. And potentially a dangerous one, because we are convincing the rest of society that technology can do things that it actually cannot do.

— As told to Colleen Walsh, Harvard Staff Writer