Robots move into operating room

Robots made the surgical team last year, providing remarkably tremor-free and precise hands for surgeons. They also offer the benefit of smaller incisions and shorter recovery times. But these high-tech devices, which the U.S. Food and Drug Administration approved for use in minimally invasive gallbladder and gastroesophageal reflux disease surgery, haven’t made a surgeon’s job that much easier – or quicker. That’s because they are not easy to maneuver, and it’s hard for the surgeon to see more than a very small area at once.

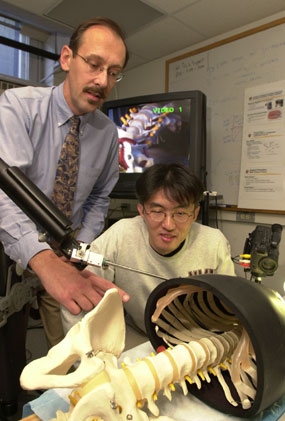

Making the next generation of robots better surgical assistants is the goal of Robert D. Howe, Gordon McKay Professor of Engineering in the Division of Engineering and Applied Sciences, and David Torchiana, Harvard Medical School associate professor of surgery and chief of cardiac surgery at Massachusetts General Hospital. Torchiana, who is the principal investigator of a multicenter clinical trial of robotic-assisted heart surgery, and Howe are devising a way for a robot to direct a surgeon to structures in the body more efficiently. Their innovation could have applications in other types of surgery in addition to heart operations, and could lead to the development of surgical procedures that are not even imaginable today, Howe says.

For his clinical study, Torchiana uses Zeus, the surgical robot system made by Computer Motion of California, in routine coronary bypass operations. The trick is to find and free up the mammary artery inside the chest wall, which Torchiana will graft onto the heart to restore blood supply to the muscle.

To perform the procedure, Torchiana sits at a console and views greatly magnified portions of the chest cavity on a monitor while manipulating a controller that directs robotic arms to perform the actual surgery. Attached to these arms are long narrow rods, which are inserted through small incisions in the patient’s chest. On one rod is a video camera, which transmits pictures of the chest cavity to the monitor. As he watches the screen, Torchiana guides the other arms, on which miniature surgical instruments are mounted, to the blood vessel.

The artery he is looking for is as thin as spaghetti – about one-eighth of an inch in diameter – and extends from the collarbone to the bottom of the rib cage. “It’s a very small, delicate structure,” Torchiana says. The confined opening of the chest cavity is what makes manipulating the tools difficult. He is further hampered by how little of the artery he can see at once. He must move along the artery a tiny section at a time, like a hiker following a heavily wooded trail from tree to tree without knowing the path’s exact course and difficulty. Torchiana needs to dissect from 4 to 8 inches of the vessel. This portion of the procedure takes at least twice as long as it would in the traditional open-heart method, which is why Torchiana started thinking about alternatives.

Torchiana approached Howe about these constraints at a meeting sponsored by the Center for the Integration of Medicine and Innovative Technology (CIMIT), a consortium of physicians and engineers at Harvard, Massachusetts Institute of Technology, Draper Laboratories, and Boston-area hospitals. Howe had just made a presentation on his robotics research to the group.

The two researchers decided to tackle the problem of visibility first. Their solution combines the use of CT scans with virtual fixtures to provide the robot with landmarks in the patient’s chest. “The procedure requires us to integrate medical images with the robot controller, which has been a challenge,” Howe says. “That’s one we got working in the last month or two.” Howe and Torchiana began testing an image-guided robotic device in September. Their research is supported by the National Science Foundation and CIMIT.

In a laboratory study, small metal pins are inserted into the skin on the chest of an experimental animal. A CT scan of the subject records the placement of the pins. During the operation, when Torchiana touches the pins with the tip of the robot-mounted instrument, the robot will “know” the location of the artery relative to the pins based on the CT image stored in the computer. The robot can then lead Torchiana to the artery.
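For readers curious how touching a few pins can tell a robot where an artery lies, the step can be pictured as a coordinate registration: the same pins are known in CT coordinates and in robot coordinates, so a rotation and shift that lines them up will also carry the artery's CT position into the robot's frame. The Python sketch below is only an illustration under stated assumptions, not the researchers' software. It assumes exactly three non-collinear pins, noise-free measurements and a rigid chest; the function names and the sample coordinates are hypothetical.

```python
import math

def sub(a, b):
    return [a[i] - b[i] for i in range(3)]

def cross(a, b):
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]

def unit(v):
    n = math.sqrt(sum(c * c for c in v))
    return [c / n for c in v]

def frame(p0, p1, p2):
    """Orthonormal axes (as rows) built from three non-collinear pins."""
    x = unit(sub(p1, p0))
    z = unit(cross(x, sub(p2, p0)))
    y = cross(z, x)
    return [x, y, z]

def register(ct_pins, robot_pins):
    """Return (R, t) so that robot_point = R * ct_point + t."""
    A = frame(*ct_pins)      # pin frame expressed in CT coordinates
    B = frame(*robot_pins)   # same pin frame expressed in robot coordinates
    # R = B^T A: express a CT vector in the pin frame, then in robot axes.
    R = [[sum(B[k][i] * A[k][j] for k in range(3)) for j in range(3)]
         for i in range(3)]
    rotated_origin = [sum(R[i][j] * ct_pins[0][j] for j in range(3))
                      for i in range(3)]
    t = sub(robot_pins[0], rotated_origin)
    return R, t

def ct_to_robot(R, t, p):
    """Map a point located in the CT image into robot coordinates."""
    return [sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3)]

# Hypothetical data: pin positions read off the CT image, and the same
# pins as touched by the instrument tip (a quarter turn plus a shift).
ct_pins = [[0, 0, 0], [1, 0, 0], [0, 1, 0]]
robot_pins = [[10, 20, 30], [10, 21, 30], [9, 20, 30]]
R, t = register(ct_pins, robot_pins)
artery_in_robot = ct_to_robot(R, t, [2, 3, 4])
```

With real, noisy measurements and more than three pins, a least-squares fit over all pins would be the more usual choice; the three-pin construction is used here only because it keeps the idea visible in a few lines.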

The idea behind the system, Howe says, is similar to the computer mouse’s snap-to-grid feature that makes positioning of the cursor more precise. “The first steps are extremely encouraging. I expect within a decade that robots will be an everyday part of surgical practice,” Howe says.
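The snap-to-grid comparison can be made concrete with a small sketch. In the Python below, the artery is represented as a chain of straight segments and the instrument tip is pulled onto the nearest point of that chain whenever it comes within a capture distance. This is one plausible reading of the analogy, not a description of the researchers' controller; the function names, the straight-segment path and the capture radius are all assumptions made for illustration.

```python
import math

def closest_point_on_segment(p, a, b):
    """Nearest point to p on the segment from a to b."""
    ab = [b[i] - a[i] for i in range(3)]
    ap = [p[i] - a[i] for i in range(3)]
    denom = sum(c * c for c in ab)
    if denom == 0:
        s = 0.0
    else:
        s = sum(ap[i] * ab[i] for i in range(3)) / denom
        s = max(0.0, min(1.0, s))  # stay within the segment's ends
    return [a[i] + s * ab[i] for i in range(3)]

def snap_to_path(tip, path, capture_radius):
    """Snap the tip onto the path if it is within capture_radius.

    Outside that distance the tip position is returned unchanged,
    so the surgeon keeps free control away from the vessel.
    """
    best, best_d = None, float("inf")
    for a, b in zip(path, path[1:]):
        q = closest_point_on_segment(tip, a, b)
        d = math.dist(tip, q)
        if d < best_d:
            best, best_d = q, d
    return best if best_d <= capture_radius else list(tip)

# Hypothetical artery path (arbitrary units) and two tip positions.
path = [[0, 0, 0], [10, 0, 0]]
near = snap_to_path([3, 1, 0], path, capture_radius=2)
far = snap_to_path([3, 5, 0], path, capture_radius=2)
```

In this sketch the first tip position is close enough to be drawn onto the path, while the second is left where the surgeon put it.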

The two researchers anticipate that what they’re calling “virtual fixturing” could be used to determine the location of other structures in the body. The concept they are developing “is enormously important for robotic surgery,” Torchiana says. “Any kind of operation that requires micromanipulation is going to be a natural for robotics with image guidance.” He names neurosurgery as one potential application.

Howe and Torchiana eventually would like to skip the imaging step (the CT scan). Instead, the robotic device would use a tactile sensor – also developed by Howe – that could feel the pulse of the artery and guide the surgeon to the correct location.

However, robotics will not eliminate the need for a heart surgeon altogether. “The sewing machine does a robotic function for a seamstress,” Torchiana says. “But the person still has to put the garment together. It’s more a tool than an autonomous device.”