Kempner researchers harness generative AI to reveal what neurons ‘want’

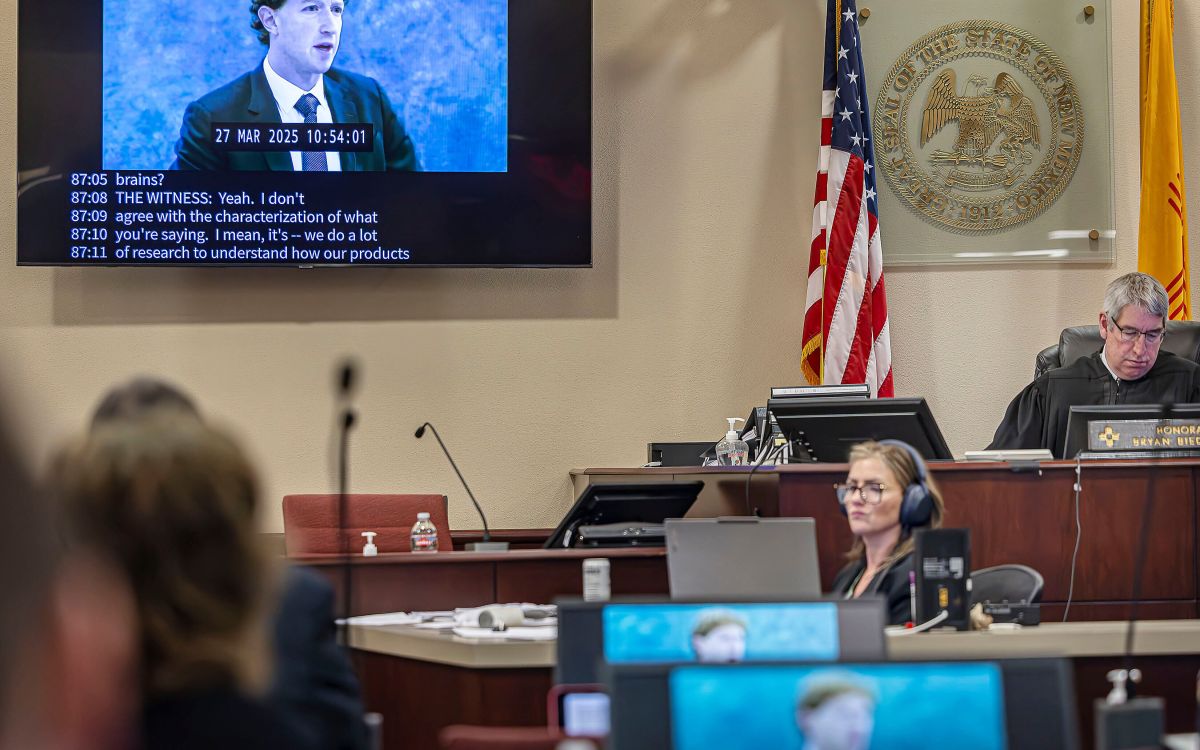

Binxu Wang (right) and her former Ph.D. adviser and current collaborator Carlos Ponce use generative AI to let neurons dictate the properties of test images, revealing unforeseen patterns in the neurons’ preferences.

Photo by Kris Brewer

How can scientists figure out what visual information a neuron really responds to? For decades, neuroscientists have tried to answer this question by showing animals pictures of, for example, faces, trees, houses, and other animals. The ways that neurons in vision-related brain areas respond to these pictures give researchers clues about the kinds of information that the neurons are processing from them.

While this approach has yielded important discoveries, it also has notable limitations. “When you see a neuron responding to a cat, it’s tempting to call it a ‘cat neuron’ or ‘face neuron,’” says Binxu Wang, a research fellow at the Kempner Institute for the Study of Natural and Artificial Intelligence. “While this can be useful, it can also be a trap.”

The problem, says Wang, is that the test images are chosen by human researchers. Because the researchers rely on their own visual intuitions to choose and interpret images, they may miss the very patterns that neurons in the primate visual system respond to most strongly. In other words, images chosen by humans might not fully reflect the kinds of information that the neurons preferentially respond to.

In a new paper titled “Neuronal tuning aligns dynamically with object and texture manifolds across the visual hierarchy,” just published in Nature Neuroscience, Wang and her former Ph.D. adviser and current collaborator Carlos Ponce, assistant professor of neurobiology at Harvard Medical School and affiliate faculty at the Kempner Institute, present a new approach to this problem. They use generative AI to let the neurons themselves shape the properties of test images, revealing hidden structure in the neurons’ visual preferences.