Is social media responsible for what happens to users?

Parents of children who have died due to alleged social media-related harms hold a vigil on Feb. 5 at the Los Angeles Superior Courthouse, ahead of the landmark social media addiction trial.

Jordan Strauss/AP Content Services for ParentsTogether Action

Landmark suit to examine 1996 law, questions about mental health, other harms, role of website design

A Los Angeles jury will decide whether Meta’s Instagram and Google’s YouTube are addictive and causing harm to teenagers and children — and whether they can be held responsible for it.

The lawsuit involves a 20-year-old California woman who says her compulsive use of Instagram and YouTube since childhood resulted in mental health struggles. She argues the platforms are intentionally designed to be addictive in order to boost user engagement.

A 1996 law protects online platforms from liability for content posted by users.

Meta and Google have denied that their popular services pose mental health dangers to young people. But a growing body of research, along with real-life observations of parents and teachers, suggests otherwise.

Glenn Cohen is deputy dean and the James A. Attwood and Leslie Williams Professor of Law at Harvard Law School, and faculty director at the Petrie-Flom Center for Health Law Policy, Biotechnology & Bioethics.

In this edited conversation, Cohen explains why the Los Angeles trial, which began Feb. 9, is testing tech’s insulation from liability and may reshape the public’s relationship with social media.

Many are calling this a landmark trial. Do you share that view?

This is a case that initially was against Meta, Google, TikTok, and Snapchat. It’s currently against Meta and Google; Snapchat and TikTok settled out of court. It’s a so-called bellwether trial.

There are about 1,600 cases that are in multidistrict litigation, which is a procedure for consolidating cases for some parts of the adjudication process. This is the first, but there are going to be about two dozen bellwether trials. And these cases are selected as representative litigation for the larger pool of litigants.

I think it’s going to be quite an important case in part because it’s a theory that has a serious consequence for Meta and Google should they lose. They tried to get the case resolved in an earlier stage of the proceedings. When the court refused to grant them that resolution in November, that’s when Snapchat and TikTok decided to settle and not to take their chances at trial.

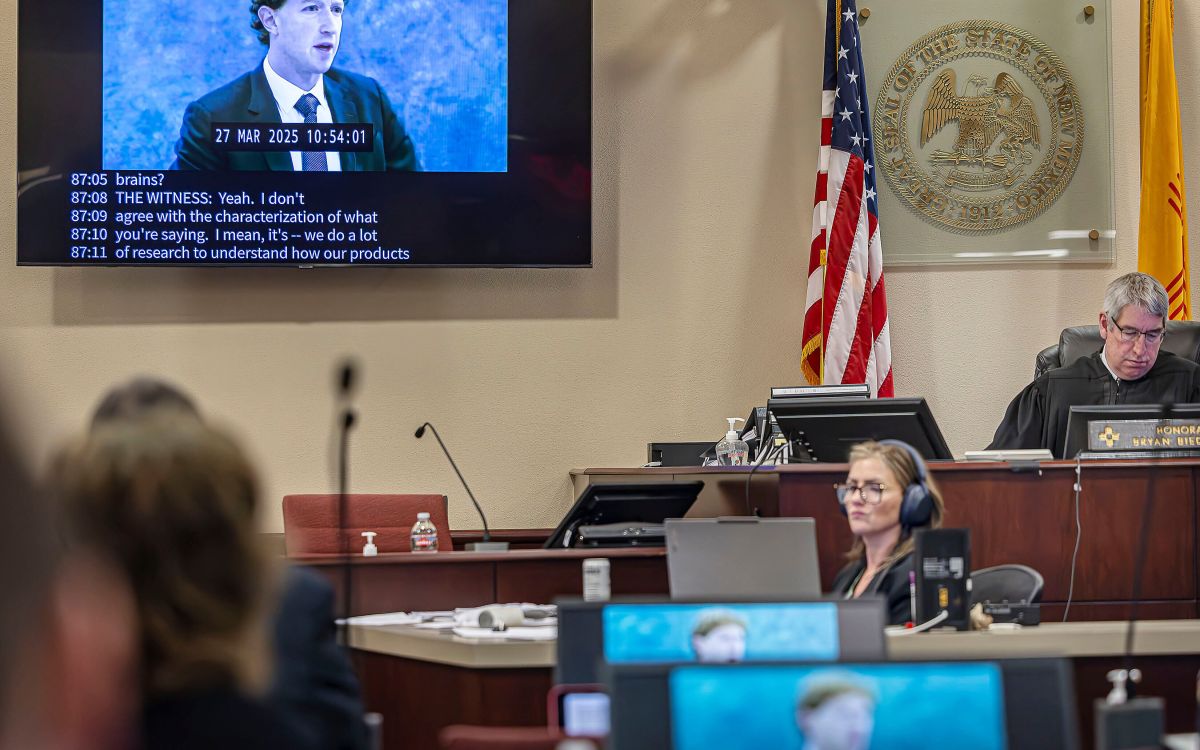

Meta and Google are risking a lot. This is one of the very first cases where we saw Meta CEO Mark Zuckerberg take the stand, and so this is a pretty unprecedented sort of thing.

Meta and Google are alleged to have deliberately designed their products to be addictive to teenagers and younger children. What does the plaintiff have to prove?

Her claim is that she became addicted to these sites as a child, and now experiences anxiety, depression, and body image issues as a result.

The opening statement by her lawyer, Mark Lanier, said things like it’s addictive to the brains of children and that Meta engineered addiction in children’s brains. He draws explicit comparisons to tobacco. Tobacco and gambling are the two public health analogues that you’re going to see come up multiple times.

The allegation is that, just as slot machines and cigarettes use particular techniques from behavioral science and neurobiology, the design decisions here were made to maximize the engagement of youth and increase advertising revenue. That’s a main claim the plaintiffs use to frame their case.

One of the most interesting legal questions has to do with Section 230 of the Communications Decency Act. This was a 1996 law that shielded online platforms from liability for third-party content posted on websites.

The issue of whether the Act protects Meta in this case came up at prior stages of this litigation where the court disagreed with Meta. Should Meta lose this case, it is likely to come up on appeal.

Glenn Cohen.

File photo by Niles Singer/Harvard Staff Photographer

Tech firms are involved in lots of litigation. What makes this particular lawsuit so compelling?

What’s novel about this case is that the plaintiffs frame their claim as having nothing to do with content. They claim this is about design and functionality, aspects like infinite scroll. So, they argue, a court can adjudicate liability for the design without running afoul of Section 230 or facing First Amendment questions about content regulation and the like.

The trial court has accepted those arguments at this stage of the proceeding. But on appeal, should Meta lose this case, I suspect that ruling will be front and center.

The other thing to look for in the litigation is about causation — that is, for the plaintiffs to show that the harms would have been avoided if Meta had not designed the product in this way, that the health issues arose because of Meta product design.

We’re going to see back-and-forth and jousting about whether it’s the content or the design that’s causing the damage this young woman alleges.

And I think we’re going to see a bunch of back-and-forth on what are called “failure to warn” questions.

The mother of the plaintiff has alleged that she had never seen any of the warnings on the subject. She learned about it from watching “60 Minutes,” after her daughter had been using the Meta products. There are going to be questions about what kinds of warnings were provided, whether they were sufficient, and whether that negates the liability of Meta.

One more thing will be the quality of social science research linking the platform features to the alleged harms to this young woman and to children in general. That’s going to be another pressure point.

Those are probably three or four things that I would keep my eye on that are going to be significant.

There’s a real chance that Meta will lose this case at trial. Some of their best arguments were ones that have been rejected in an earlier decision by the court. Still, those are mostly legal questions that I think will be taken up on appeal.

So, it’s possible that Meta will lose the trial and yet ultimately be vindicated at the end of the day.

What are the strengths and weaknesses of their arguments, in your view?

The plaintiff seems to have a very compelling story to tell, and that often is helpful, especially in front of a jury.

And I think they’ve already gotten some good stuff out of Mr. Zuckerberg on the stand. I don’t think he cratered, but the sound bites they’ve generated have been helpful to the plaintiff and the general mood around this litigation.

So those are two of the things that are strongest for the plaintiff side.

On the other side, there is this issue about content versus design. It’s quite hard to disentangle, and that will be something that Meta will be hitting repeatedly.

And then, this question of causation will come up repeatedly, as well, and whether the plaintiff’s attorneys can demonstrate the relationship between the design features and the injuries the young woman sustained.

I imagine that we’ll see the lawyers argue these issues both in the trial court proceeding and certainly on appeal if they should lose.

Many countries in Europe, as well as Australia and others, now regulate social media, as well as AI. Could this case accelerate regulations either here or abroad?

I think so. The things that are most likely on the table would be much more age verification and age gating.

Zuckerberg, in his testimony, said that phone makers bear more responsibility and that having a reliable way to verify a young user’s age without a driver’s license would be a “very wise and simple way” to do it.

So that will be part of Meta and the other social media companies’ story too: that regulators should be focused on the main place where adolescents and children access social media — their phones — and that phone makers should be regulated. That’s going to be an argument they make to stave off regulation on them.

One of the biggest challenges we’ve seen with a bunch of the cases that have made it to the U.S. Supreme Court is Section 230. And then, on the flip side, the First Amendment and a reluctance to be regulating anything that approaches content.

Now, I do think there are probably congressional bills that could be passed that could protect minors without touching content or potentially running afoul of the First Amendment. But the social media companies have been fairly adept, thus far, in avoiding a lot of regulation.

There’s also an argument that if these trials are successful, some might point to them and say, “We don’t need governmental one-size-fits-all regulations. Tort liability is doing the work here for us.” So, there’s a way in which a win for the plaintiffs might also be an argument against too much top-down regulation.

Apart from the ultimate decision at trial, the trial itself is generating all sorts of sound bites and things that might make regulators more interested. We’ve seen increased interest in regulation outside the U.S.; we’ve seen a little bit within the U.S. with some of the states regulating in this space.

Notwithstanding the fact that social media companies are good at lobbying, there’s a real chance that some of the stuff that will be revealed in the course of these trials may change the average American’s relationship with social media companies.