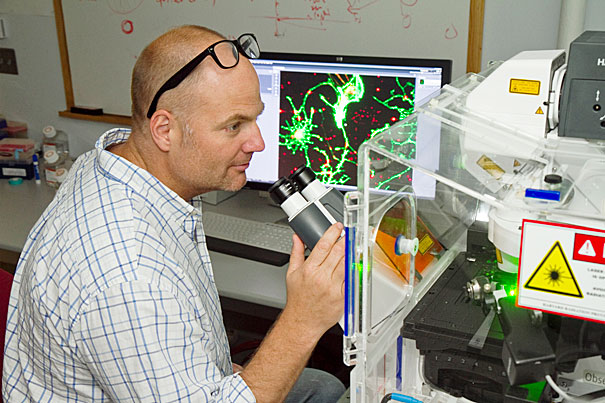

A Harvard research team led by Kevin Kit Parker, a Harvard Stem Cell Institute principal faculty member, has identified a set of 64 crucial parameters by which to judge stem cell-derived cardiac myocytes, making it possible for scientists and pharmaceutical companies to quantitatively judge and compare the value of stem cells.

File photo by Jon Chase/Harvard Staff Photographer

Quality control

Harvard researchers develop long-needed standards for gauging ‘good’ stem cells

After more than a decade of sometimes incremental, sometimes paradigm-shifting advances in stem cell biology, most people with a basic understanding of life sciences know that stem cells are the basic form of cell from which all specialized cells, and eventually organs and body parts, derive.

But what makes a “good” stem cell, one that can reliably be used in developing drugs and for studying disease? Researchers have made enormous strides in understanding the process of cellular reprogramming, and how and why stem cells commit to their adult role. But until now, there have been no standards, no criteria, by which to test these ubiquitous cells for their ability to faithfully adopt characteristics that make them suitable substitutes for patients in drug testing. The need for such quality-control standards becomes ever more critical as industry looks toward manufacturing products and treatments using stem cells.

Now, a research team led by Kevin Kit Parker, a Harvard Stem Cell Institute (HSCI) principal faculty member, has identified a set of 64 crucial parameters from more than 1,000 by which to judge stem cell-derived cardiac myocytes, making it possible for scientists and pharmaceutical companies to quantitatively judge and compare the value of the countless commercially available lines of stem cells.

“We have an entire industry without a single quality-control standard,” said Parker, the Tarr Family Professor of Bioengineering and Applied Physics in Harvard’s School of Engineering and Applied Sciences, and a core member of the Wyss Institute for Biologically Inspired Engineering.

HSCI co-director Doug Melton, who also is co-chair of Harvard’s Department of Stem Cell and Regenerative Biology, called the study “very important.”

“This addresses a critical issue,” Melton said. “It provides a standardized method to test whether differentiated cells, produced from stem cells, have the properties needed to function. This approach provides a standard for the field to move toward reproducible tests for cell function, an important precursor to getting cells into patients or using them for drug screening.”

Parker said that starting in 2009, he and Sean P. Sheehy, a graduate student in Parker’s lab and the first author of a paper just given early online release by the journal Stem Cell Reports, “visited a lot of these companies [commercially producing stem cells], and I’d never seen a dedicated quality-control department, never saw a separate effort for quality control.” Parker explained that many companies seemed to assume that it was sufficient simply to produce beating cardiac cells from stem cells, without asking deeper questions about their function and quality.

“We put out a call to different companies in 2010 asking for cells to start testing,” he said. “Some we got were so bad we couldn’t even get a baseline curve on them; we couldn’t even do a calibration on them.”

Brock Reeve, executive director of HSCI, said that “this kind of work is as essential for HSCI to be leading in as are regenerative biology and medicine, because the faster we can help develop reliable, reproducible standards against which cells can be tested, the faster drugs can be moved into the clinic and the manufacturing process.”

The quality of available human stem cells varied so widely, even within a given batch, that the only way to conduct a scientifically accurate study and establish standards “was to use mouse stem cells,” Parker said, explaining that his group was given mouse cardiac progenitor cells by the company Axiogenesis.

“They gave us two versions of the same cells, embryonic and iPS-derived cardiac myocytes. Neither performed exactly where we wanted them to be, but they were good enough for us to be able to compare them, and use them to start setting parameters,” Parker said.

“We could tell which cells were better, how they contracted, how they expressed certain genes,” Parker explained. “Using the more than 60 measures Sean developed,” on what Parker is calling the Sheehy Index, “we can say ‘these cells are good, and these aren’t as good.’ Prior to this, no one’s had a quantitative definition of what a good stem cell is.

“Everyone has been saying, ‘My cell does this, my cell does that,’ and then you test them, and they’re no good,” Parker said. “I think this shows the field you can say who’s got good cells and who doesn’t. What we have developed is a platform that allows stem cell researchers to compare their stem cells in a standardized and quantitative way. This rubric provides something closer to an apples-to-apples comparison of stem cells from different sources that indicates not only how different they are from one another, but also how far they are from accurately representing the cells they are meant to stand in for.”